This section summarises changes to data collections, calculation methods and other factors which have had an impact on the FE statistics we publish and how this affects their comparability over time. Annex 2 provides information on FE policy developments which, to some extent, help to explain these changes.

Apprenticeships

Sector subject area update for the 2025/26 academic year

The apprenticeship sector subject area (SSA) data published since January 2026 is based on updates introduced in December 2025, when Skills England updated the SSA codes for 139 apprenticeship standards to align with the latest Ofqual guidance. DfE calculations of SSA data for the 2025/26 academic year onwards are based on these updates. We have also calculated figures on the same basis for the previous five years, to provide a consistent time series for comparison. SSA figures for apprenticeships, and for overall further education and skills (which includes apprenticeships), may therefore not match those released in previous publications.

Changes to published data for the 2024/25 academic year

Although there have been no changes to how apprenticeships data was calculated for the 2024/25 academic year, there were two changes to the data we publish. From 2024/25, we have ceased publication of the public sector apprenticeship statistics and no longer publish monthly updates on apprenticeship starts.

The statutory target for public sector apprenticeships ended on 31 March 2022; however, we continued to collect and publish this data. Given the burden placed on public sector organisations to submit their employee and apprenticeship data to the department, we sought feedback from providers to establish the relevance of these statistics going forwards. Feedback confirmed that there would be no detrimental effect from ending the collection and reporting of public sector apprenticeships data. The department has therefore ceased collection and publication of this data from 2024/25. The final release of public sector apprenticeship statistics in this series was published in November 2024.

As the result of a review of the use and frequency of our statistics releases, we have ceased publication of monthly updates on apprenticeship starts. However, information on apprenticeship starts by month continues to be included within the accompanying dashboard and underlying data of the quarterly publications.

Since November 2025 we have included a separate Local Authority category for provider type. The local authority provider type was previously grouped within the ‘Other public funded i.e. LAs and HE’ category, but user feedback indicated it would be more useful shown separately. The ‘Other’ category now includes HE, NHS Trusts, armed forces, government departments and other public bodies, and does not include local authorities.

Apprenticeships during the COVID-19 pandemic

COVID-19 restrictions led to a fall in the number of apprenticeship vacancies being advertised, some employer failure and redundancies, which in turn led to a fall in the number of apprenticeship starts. In particular, the months from March to October 2020 saw a substantial reduction in the number of starts. As a result, the 320,000 apprenticeship starts seen in each of the 2019/20 and 2020/21 academic years were some 70,000 below the level seen in 2018/19. However, as the impact of COVID-19 receded, the number of starts recovered and by 2021/22 stood at 349,000.

The COVID-19 pandemic disrupted learning, leading to some learners taking extended breaks. We therefore changed the method used to identify new starts for the 2020/21 academic year, to take this disruption to learning into account: learners who returned in 2020/21 after extended breaks were counted as continuing, rather than as new starts. This method was applied in 2020/21 only and for all other years learners who take extended breaks and return to a programme in a different academic year are counted as a new start.
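The counting rule described above can be illustrated with a minimal sketch. The function name, its arguments and the string labels are hypothetical and are not ILR fields; the actual derivation is likely to involve further conditions that are not described here.

```python
def classify_start(academic_year: str, returned_after_extended_break: bool) -> str:
    """Classify a learner who begins or resumes a programme in a given year.

    Hypothetical helper illustrating the rule in the text:
    returners after an extended break were counted as 'continuing'
    in 2020/21 only; in all other years they are counted as new starts.
    """
    if returned_after_extended_break and academic_year == "2020/21":
        return "continuing"
    return "new start"


# A returner in 2020/21 is not counted as a new start ...
covid_year = classify_start("2020/21", returned_after_extended_break=True)
# ... but the same situation in any other year is.
other_year = classify_start("2021/22", returned_after_extended_break=True)
```

Because the exception applies to a single year, start counts for 2020/21 are slightly lower than they would have been under the usual rule.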

COVID-19 related restrictions delayed the completion and awarding of some apprenticeships, so care should be taken when comparing achievements between years during the COVID-affected period. The overall number of apprenticeship achievements decreased noticeably in 2021/22, falling to a recent low of 137,000. This is explained mainly by the impact of COVID-19 and, to some extent, lower start numbers since the introduction of the apprenticeship levy, along with a general move towards courses of longer duration. As the impact of COVID-19 receded, however, the number of apprenticeship achievements began to recover, with over 162,000 recorded a year later in 2022/23.

Starts supported by Apprenticeship Service Account (ASA) levy funds

In the January 2020 Apprenticeships publication, the ‘Levy-supported starts’ measure was renamed as ‘Starts supported by ASA levy funds’, to better describe what we are counting. We also changed the method for calculating these starts:

Prior to January 2020, the number of levy-supported starts was based on matching Individualised Learner Record (ILR) starts data to information in an organisation’s ASA. This match is known as the ‘data lock’ and is essential for payment of levy funds.

However, this data match was not always timely, particularly during the early part of the academic year, and led to an undercount of starts supported by the ASA levy. To improve the accuracy of the count for in-year starts, we introduced an alternative method, which made use of a new ILR field that recorded the contract type an apprentice is funded through.

The change to the method for calculating starts supported by the ASA levy means that data from 2019/20 onwards is not directly comparable with earlier years. A revised time series was made available for final end-of-year data, but not for provisional in-year data.

From 9 January 2020 the apprenticeship service was extended to all non-levy paying employers to register and use. The new ILR field was updated to include both levied and non-levied starts, which means a small number of non-levied starts are now included in the ‘Starts supported by ASA levy funds’ measure. This change affects data from the 2020/21 academic year onwards and although it makes only a small difference to overall start numbers, it should be taken into consideration when making comparisons between years.

The majority of the apprenticeship programme is now funded through the apprenticeship levy, although an organisation can choose to fund apprenticeships themselves.

Calculation of achievements on apprenticeship standards

A new method for calculating the date of achievement for apprenticeship standards, based on the ILR end point assessment (EPA) field, was introduced in January 2020.

Under the previous method, the leave month/year when the learning had been successfully completed and achieved was used as the learner’s achievement date. The completion and achievement dates were typically the same date in this case.

However, this is not true for the EPA and leave dates, with the EPA potentially being on a different date to the leave date; for example, a learner may have completed their learning in the 2018/19 academic year (and left the programme), but their EPA may have been in the following academic year (2019/20).

Achievement rates from 2019/20 onwards are therefore not directly comparable to earlier years.
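The effect of switching from the leave date to the EPA date can be seen in a minimal sketch that assigns a date to an academic year (August to July). The function name and the two example dates are illustrative assumptions, not taken from the ILR.

```python
from datetime import date


def academic_year(d: date) -> str:
    """Return the academic year (1 August to 31 July) containing d, e.g. '2019/20'."""
    start = d.year if d.month >= 8 else d.year - 1
    return f"{start}/{(start + 1) % 100:02d}"


# Illustrative dates only: learning finished in June, EPA passed in October.
leave_date = date(2019, 6, 28)
epa_date = date(2019, 10, 15)

year_under_previous_method = academic_year(leave_date)  # based on leave date
year_under_new_method = academic_year(epa_date)         # based on EPA date
```

In this example the same achievement moves from 2018/19 to 2019/20, which is why counts under the two methods cannot be compared directly.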

Motor vehicle service and maintenance technician (light vehicle) apprenticeship standard sector subject area change

In 2019, the Institute for Apprenticeships reclassified the Motor Vehicle Service and Maintenance Technician (light vehicle) apprenticeship standard from the Retail and Commercial Enterprise sector subject area tier 1 to Engineering and Manufacturing Technologies. The new sector subject area tier 2 for the standard is Transportation Operations and Management. All data published from March 2019 containing sector subject area fields have been updated to reflect this change, including historical data.

Apprenticeships expected duration

Apprenticeship expected duration is the expected time (in days) required to complete a standard or framework. Two changes were introduced to the method for calculating the expected duration in November 2018.

Before November 2018, the duration calculation was based on the learning start date and either the actual end date or planned end date: in cases where the actual end date was not available, the planned end date of the apprenticeship (recorded in the ILR) was used instead.

Using this method, the actual end date was used if the apprenticeship completion status was recorded as ‘completed’ and the planned end date was used for all other statuses: withdrawn, planned break, transferred and continuing. From the November 2018 release onwards, the methodology was changed so that only the planned end date is used in the calculation of apprenticeship durations.

In addition, we excluded from the calculation those learners who were returning to their apprenticeship after a break. Since these learners have prior attainment, it is expected that the duration of their apprenticeship on returning will be shorter compared to new starters. Including returning learners was therefore likely to reduce the average expected duration of apprenticeships. Returning learners are identified as those that have an original start date which is different to their learning start date, indicating they restarted their programme after a break.

As a result of these changes, expected durations from the 2017/18 academic year onwards are not directly comparable to earlier years. For example, expected duration for apprentices (excluding re-starters) was 581 days for the 2017/18 academic year compared to 551 days using the previous method.
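The revised calculation can be sketched as follows. This is a minimal illustration under stated assumptions: the class and field names are hypothetical, a missing original start date is treated as "not a returner", and the duration is taken as the simple difference in days between the learning start date and the planned end date.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import List, Optional


@dataclass
class Apprenticeship:
    learning_start: date
    planned_end: date
    original_start: Optional[date] = None  # assumption: None means no earlier start


def is_returner(a: Apprenticeship) -> bool:
    """A returner has an original start date that differs from the learning start date."""
    return a.original_start is not None and a.original_start != a.learning_start


def expected_duration_days(a: Apprenticeship) -> int:
    """Expected duration uses the planned end date only (method from November 2018)."""
    return (a.planned_end - a.learning_start).days


def mean_expected_duration(records: List[Apprenticeship]) -> float:
    """Average expected duration, excluding learners returning after a break."""
    return mean(expected_duration_days(a) for a in records if not is_returner(a))


records = [
    Apprenticeship(date(2017, 9, 1), date(2019, 3, 1)),
    Apprenticeship(date(2017, 10, 1), date(2018, 10, 1)),
    # Returner: restarted in January 2018 after first starting in 2016, so excluded.
    Apprenticeship(date(2018, 1, 8), date(2018, 7, 8), original_start=date(2016, 9, 1)),
]
average = mean_expected_duration(records)
```

Leaving the returner in would pull the average down, which is the effect the exclusion is intended to remove.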

Planned length of stay

Minimum durations were put in place for framework-based apprenticeships from August 2012. For learners aged 16 to 18 apprenticeships had to last at least 12 months. For learners aged 19 and over there was more flexibility, as some adults have prior learning/attainment and can therefore complete training more quickly. For example, if the training provider could provide evidence of prior learning the minimum duration was reduced to 6 months. For new apprenticeship standards the minimum duration is 12 months. These differences should be considered when comparing planned length of stay data between years.

From 2015/16, the methodology to calculate planned length of stay was revised to include those learners whose start date was the same as their planned end date. In 2014/15 this would have meant 100 learners included in the total for ‘12 months or more’ would also have been included in the total for ‘fewer than 12 months’. As this methodology change affected a relatively small number of learners, figures for previous years were not revised.

Employer Ownership Pilot collection

The Employer Ownership Pilot (EOP) offered employers in England direct access to public investment over the period of the pilot (2012 to 2016) to design and deliver their own training solutions.

The 2014/15 EOP collection was affected by the move to an improved collection system between provisional and final return dates. Although this move helped to put the final 2015/16 collection on a better footing, there were notable differences between provisional and final 2014/15 data. Rather than extend the considerable work with providers to reconcile these differences, DfE took the decision to continue to use the provisional data, as it was almost complete and passed full quality assurance.

Furthermore, the impact on final figures was negligible, as EOP numbers were very small relative to overall apprenticeship starts: in 2014/15, 1,500 apprenticeship starts out of a total of 499,900 were EOP and in 2015/16 this was 1,000 out of 509,400. The EOP was withdrawn in 2015/16.

Apprenticeship service measures

Commitments

Commitments show an intent for an apprentice to start.

A commitment is a potential apprentice, who is expected to go on to start an apprenticeship programme and is recorded on the apprenticeship service. Fully agreed commitments have agreement between the employing organisation and the training provider setting out how they will support the successful achievement of an apprenticeship. Otherwise, the commitment has a status of ‘pending approval’.

Levy-paying organisations have used the apprenticeship service since its introduction in May 2017. This is not the case for non-levy-paying organisations, so trends in total commitments and in commitments of non-levy-paying organisations should be interpreted with some caution.

- Prior to January 2020, non-levy organisations could only set up apprenticeship service accounts and have commitments in order to utilise levy transfers, although a limited number of non-levy organisations registered accounts as part of testing in preparation for the extension of the service to all employers.

- On 9 January 2020, the apprenticeship service was extended so that all employers that do not pay the levy could register and reserve funds.

- Since 1 April 2021, all new apprenticeship starts at both levy-paying and non-levy-paying organisations have been made via the apprenticeship service.

Commitments may be recorded or revised on the apprenticeship service system after the training start date has passed. This means all commitments data should be treated as provisional and may be subject to further revision as more commitments are recorded on the apprenticeship service system. Data for previous academic years may also be revised as details are updated by employers. The data is only fully captured when providers confirm details in the Individualised Learner Record (ILR). In the interests of transparency, what is known at the point of reporting has been included where possible.

Transferred commitments

Transferred commitments are commitments where the transfer of apprenticeship levy funds from the account of a levy-paying organisation to another apprenticeship service account has taken place.

As outlined in the funding section, the proportion of funds which can be transferred between accounts has changed since they were introduced in 2018. From April 2018 organisations could transfer up to 10% of the annual value of funds entering their apprenticeship service account. This limit was increased to 25% in April 2019 and in April 2024 it increased again, to 50%. These changes should be considered when interpreting changes to the number of transferred commitments over time.
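The changing transfer limit can be expressed as a small lookup. This is a sketch only: the function and constant names are hypothetical, and because the text gives only the month in which each limit took effect, the lookup works at month level rather than assuming a specific day.

```python
from typing import Optional

# (year, month) from which each transfer limit applied, as described in the text.
TRANSFER_LIMITS = [
    ((2018, 4), 0.10),
    ((2019, 4), 0.25),
    ((2024, 4), 0.50),
]


def transfer_limit(year: int, month: int) -> Optional[float]:
    """Return the share of annual funds that could be transferred in a given month.

    Returns None for months before transfers were introduced in April 2018.
    """
    limit = None
    for start, share in TRANSFER_LIMITS:
        if (year, month) >= start:
            limit = share
    return limit


limit_before_transfers = transfer_limit(2018, 3)
limit_mid_2021 = transfer_limit(2021, 6)
limit_mid_2024 = transfer_limit(2024, 6)
```

A rise in transferred commitments after April 2019 or April 2024 may therefore partly reflect the higher limit rather than a change in employer behaviour.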

Transferred commitments where a corresponding apprenticeship start has been recorded on the ILR are identified by matching the Commitment ID between the apprenticeship service record and the corresponding ILR record.

However, providers may not record learners immediately on the ILR, so a lag may occur between a commitment being recorded in the apprenticeship service and the corresponding commitment being recorded as a start on the ILR.

Additionally, as commitments can be recorded or amended on the apprenticeship service system after the transfer approval date has passed, all data should be treated as provisional. Data is only fully captured when providers confirm the details in the ILR.

Apprenticeship service reservations

Levy-paying training providers can make reservations on behalf of non-levy paying organisations. Additionally, providers with reservations that have progressed to a ‘commitment’ (with a training provider assigned) are counted as supporting.

As outlined in the funding section, this reservation is in advance of recruitment or an offer of an apprenticeship being made to an existing employee. The reservation ensures that employers can plan training and that funds will be available to pay for the training from the point the apprenticeship starts. This allows non-levy paying employers to access the benefits of the system and reserve funds to support their training.

It should be noted:

- Until autumn 2020, employers who did not pay the apprenticeship levy were able to access apprenticeship training either through a provider with an existing Government contract or via the apprenticeship service. From April 2021, all new apprenticeship starts are managed through the apprenticeship service. This means comparisons of reservations before and after these periods should be treated with caution.

- If the reservation expires before the apprentice starts, a new reservation has to be made.

Further Education and Skills

Treatment of component aims (September 2025)

The underlying files listed below were adjusted to correct the level assigned to some aims in the Learning Aim Reference Service, which we use as a source. This affected the academic years 2022/23 and 2023/24, and the provision type “Education and Training”.

The main impact could be seen at level 3 where the learner participation figure for 2022/23 was revised upwards from 121,330 to 140,790. For 2023/24 the level 3 figure was revised upwards from 120,580 to 142,450. For learner achievements, the 2022/23 level 3 figure was revised from 76,220 to 86,950 and for 2023/24 from 74,630 to 88,950.

| The following files were corrected: |
|---|
| Deprivation - Participation by Age, Learner Home Deprivation, Provision Type |
| Headline - Full year - Participation, Achievement by Provision type |
| Headline - In year - Participation, Achievement by Provision type |
| Learner characteristics - Participation, Achievement by Age, LLDD, Primary LLDD, Ethnicity, Provision Type |
| Learner characteristics - Participation, Achievement by Age, Sex, Detailed Ethnicity, LLDD, Provision Type |
| Provider - Education and Training Participation by Provider Name, UKPRN, Level |
| Geography Region, LA, LAD, PCON - Participation, Achievement (volumes and indicative rates per 100,000 population) |
| Underlying charts data - full year |

Changes introduced for 2024/25

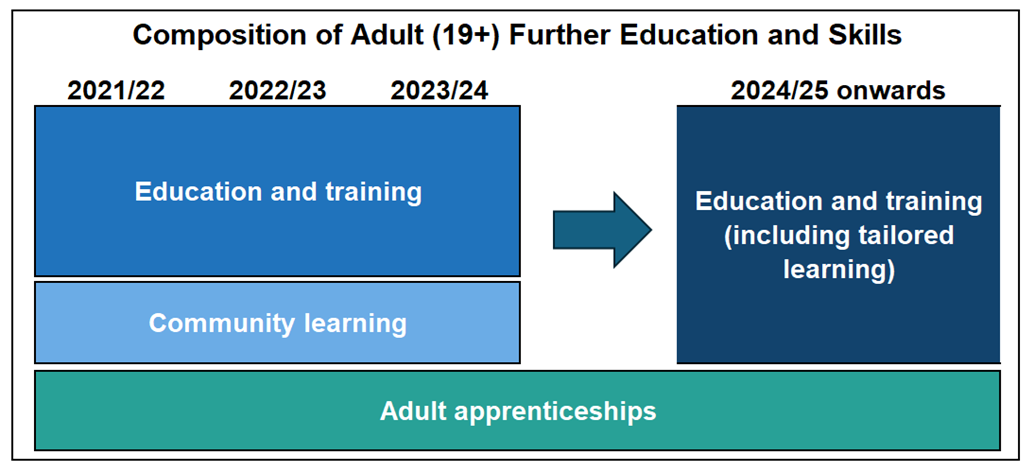

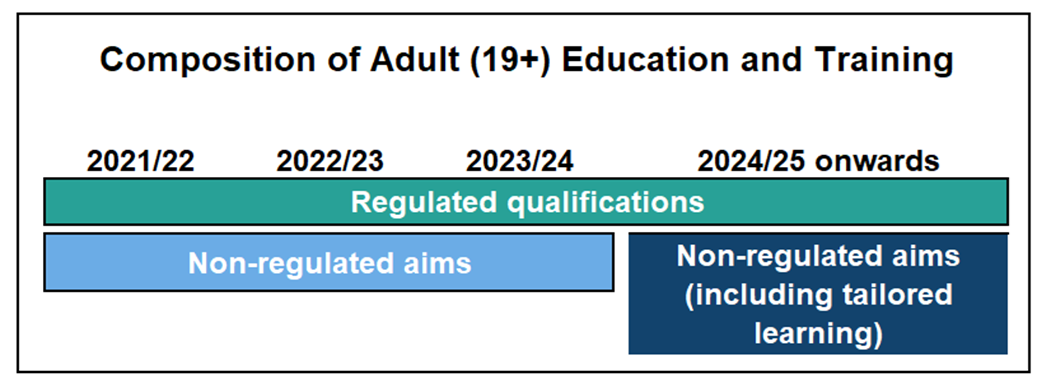

A number of significant changes have been introduced for the 2024/25 academic year which affect how FE and skills data is defined and reported. Information on these changes is provided in section 2 of this methodology.

Changes made to final 2023/24 data

The following two changes were included in final 2023/24 data published in November 2024 and will continue to be used going forwards.

GCSE enrolments and achievements for education and training data (November 2024)

All GCSEs are counted as level 2 enrolments, but only passes at grades 4 to 9 (or A* to C) are counted as level 2 achievements. Grades 1 to 3 (or D to G) and unclassified are counted as level 1 achievements. This method of counting GCSE enrolments and achievements was introduced for final 2023/24 education and training and will be used for all future publications. Previously, a GCSE pass at any grade (1 to 9 or A* to G) was counted as a level 2 achievement.

Time series data published from November 2024 onwards is based on the new method.
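The difference between the two counting methods can be shown with a minimal sketch. The function name, the grade sets and the use of "U" for an unclassified result are illustrative assumptions; the text does not say how unclassified results were treated under the previous method, so the sketch returns `None` in that case.

```python
from typing import Optional

HIGHER_GRADES = {"9", "8", "7", "6", "5", "4", "A*", "A", "B", "C"}
LOWER_GRADES = {"3", "2", "1", "D", "E", "F", "G"}
UNCLASSIFIED = "U"  # hypothetical code for an unclassified result


def gcse_achievement_level(grade: str, revised_method: bool = True) -> Optional[int]:
    """Return the level at which a GCSE result is counted as an achievement."""
    grade = grade.strip().upper()
    if revised_method:
        if grade in HIGHER_GRADES:
            return 2
        if grade in LOWER_GRADES or grade == UNCLASSIFIED:
            return 1
        return None
    # Previous method: a pass at any grade counted as a level 2 achievement.
    if grade in HIGHER_GRADES or grade in LOWER_GRADES:
        return 2
    return None  # treatment of unclassified results is not stated in the text


grade_3_now = gcse_achievement_level("3")
grade_3_before = gcse_achievement_level("3", revised_method=False)
```

A grade 3 result therefore counts towards level 1 achievements under the revised method but counted towards level 2 achievements before, while the enrolment is counted at level 2 in both cases.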

Update to English and Digital Essential Skills (November 2024)

During development work, in preparation for introducing the changes to FE data for the 2024/25 academic year, we identified several non-regulated aims which were flagged as English essential skills, but according to their learning aim titles and descriptions should instead have been flagged as digital skills.

We corrected this error for final 2023/24 data and also provided a revised timeseries. The table below shows revised adult (aged 19+) essential skills participation in English and digital skills and the data without the revision.

| Year | English (old count) | English (revised) | % difference | Digital Skills (old count) | Digital Skills (revised) | % difference |
|---|---|---|---|---|---|---|
| 2020/21 | 243,640 | 243,640 | - | 5,150 | 5,150 | - |
| 2021/22 | 239,160 | 235,010 | -1.7 | 17,590 | 21,870 | +24.3 |
| 2022/23 | 222,990 | 218,080 | -2.2 | 17,510 | 22,220 | +26.9 |
| 2023/24 | 203,680 | 195,940 | -3.8 | 15,760 | 24,120 | +53.0 |

Source: ILR (SN14)

Note: the revised aims first became available to learners in the 2021/22 academic year, therefore figures for 2020/21 are not affected.

Since participation in digital skills is relatively low compared to English, the revision has led to a substantial percentage increase in digital skills participation compared to the old count. By contrast English participation has seen a modest fall.
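The percentage differences in the table can be reproduced from the old and revised counts. The sketch below assumes the difference is expressed relative to the old count and rounded to one decimal place; the function and variable names are illustrative.

```python
def pct_difference(old: int, revised: int) -> float:
    """Percentage change from the old count to the revised count, to 1 decimal place."""
    return round((revised - old) / old * 100, 1)


# (old count, revised count) taken from the table above.
english = {
    "2021/22": (239_160, 235_010),
    "2022/23": (222_990, 218_080),
    "2023/24": (203_680, 195_940),
}
digital = {
    "2021/22": (17_590, 21_870),
    "2022/23": (17_510, 22_220),
    "2023/24": (15_760, 24_120),
}

english_change = {year: pct_difference(*counts) for year, counts in english.items()}
digital_change = {year: pct_difference(*counts) for year, counts in digital.items()}
```

The number of learners moved between categories is of a similar size in each subject; it is the much smaller digital skills base that produces the larger percentage change.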

Going forward we will use the revised version of the data as the headline measures for English and digital essential skills. A full list of the revised qualifications is provided in annex 5 ‘Learning aims now counted as Digital Skills’; these are the qualifications which are now counted as digital skills, but were previously counted as English.

Further Education and Skills during the COVID-19 pandemic

The COVID-19 pandemic (particularly the period from March 2020 to March 2022) and the associated restrictions affected FE provision and provider reporting behaviour via the ILR. Extra care should be taken when comparing between academic years and interpreting data for this period. For example, the overall number of achievements reported in 2019/20, 2020/21 and 2021/22 was lower compared to the years immediately before and after, in part due to disruption to exams and assessments, and breaks in learning during the pandemic.

Full level 2 and Full level 3 methodology in 2016/17

In 2017, the ESFA reviewed the classification of level 2 and 3 qualifications for the 19-23 funding entitlement, based on the recommendations in the Wolf Review of Vocational Qualifications.

The review led to some full level 2 and full level 3 qualifications being reclassified as level 2 and level 3, respectively.

As a result of these changes, the number of learning aims (qualifications) designated as full level 2 and full level 3 decreased substantially in 2016/17. For example, between August 2016 and April 2017, 138,100 learners were reclassified to level 2 from full level 2 and 2,800 learners were reclassified to level 3 from full level 3.

The revised methodology aligned FE statistics more closely with the 16 to 19 Performance Tables in terms of the qualifications included.

A full level 3 qualification is equivalent to 2 or more A levels, and a full level 2 qualification is equivalent to 5 or more GCSEs at grades 9 to 4 (A* to C).

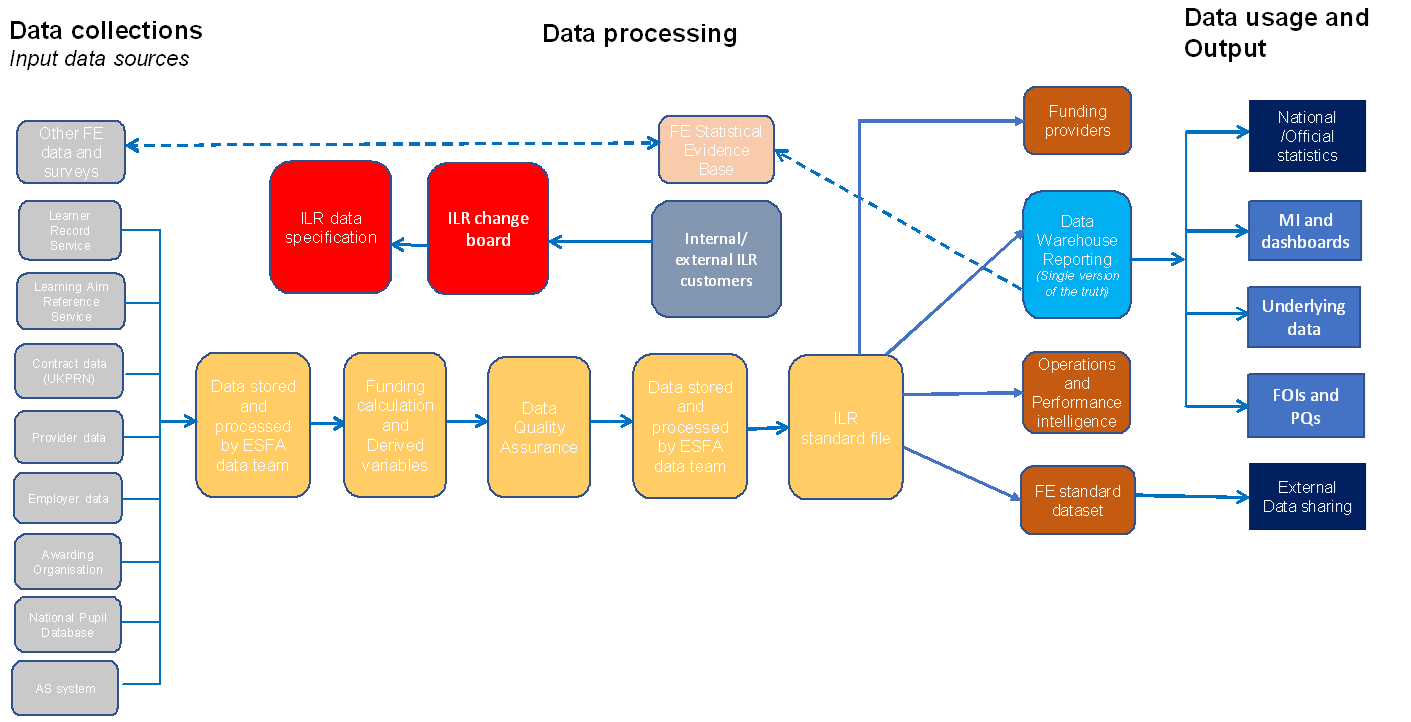

ILR Data

Introduction of the single ILR

In the 2011/12 academic year, a Single ILR (SILR) data collection system was introduced. This replaced the multiple separate data collections used in previous years and introduced small technical changes in the way learners from more than one funding stream are counted.

Overall, the new collection system led to a removal of duplicate learners and accounted for an approximate 2% reduction in total learner participation in 2011/12 compared to a year earlier. Apprenticeship participation figures were more significantly affected however, since each apprenticeship is counted, rather than individual learners. For example, where a learner participates on more than one apprenticeship programme, each is counted. The removal of duplicated learners accounted for an approximate 5% reduction in the number of apprenticeships. FE participation figures from 2011/12 onwards therefore are not directly comparable to earlier years.
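The distinction between counting learners and counting apprenticeships can be illustrated with a minimal sketch. The records, identifiers and collection labels below are invented for illustration, and the real matching rules used to remove duplicates are not described here.

```python
from typing import List, Tuple

# Hypothetical records: (learner_id, apprenticeship_programme, source_collection).
Record = Tuple[str, str, str]

records: List[Record] = [
    ("L001", "Business Administration", "collection A"),
    ("L001", "Business Administration", "collection B"),  # same programme returned twice
    ("L001", "Customer Service", "collection A"),
    ("L002", "Engineering", "collection A"),
]


def count_learners(rows: List[Record]) -> int:
    """Each learner is counted once, however many programmes they are on."""
    return len({learner for learner, _, _ in rows})


def count_apprenticeships(rows: List[Record]) -> int:
    """Each distinct learner-programme pair is counted once."""
    return len({(learner, programme) for learner, programme, _ in rows})


learner_total = count_learners(records)
apprenticeship_total = count_apprenticeships(records)
```

Here four returned records reduce to three apprenticeships and two learners once the duplicate is removed, which is the kind of reduction a single collection produces.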

Detailed information on the ILR collection is published by DfE.

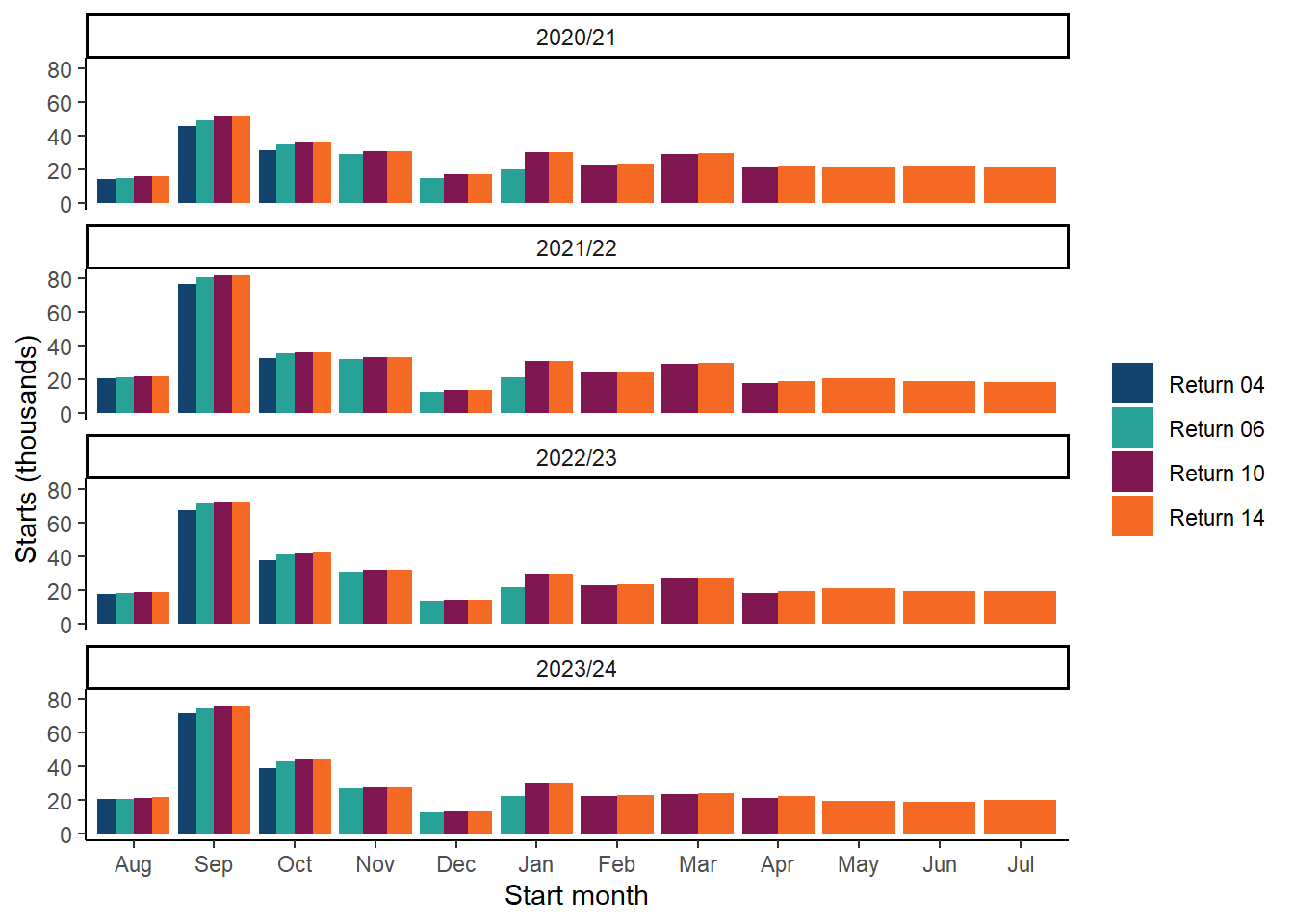

Using in-year ILR data

Updates of FE statistics throughout the year provide users with the latest data and help the department make timely assessments on the effectiveness of its policies.

However, it should be noted that in-year data for updates is taken from an operational information system, the ILR, that is primarily designed to support the funding of FE providers. As a result, there are some limitations to in-year data, which users should take into consideration:

In-year data is subject to data lags, as providers may update learner records throughout the year as new information becomes available. The size of revisions to estimates that arise from data lag can vary greatly. They tend to be around 2 to 3 per cent but can be as much as 20 per cent. Revisions are typically upward though it should be noted that downward revisions are possible.

Data lag from one year to the next is not predictable, as provider behaviour changes over time and there is no source of information that would enable the calculation of a robust estimate on the completeness of the data that has been returned.

However, we do undertake a quality assessment of the data providers have returned. If we consider estimates to be particularly weak, due to data lag or any other factor, we may defer publication of data. In addition we encourage providers to submit timely data returns throughout the year, ahead of the final collection in October.

The publication of statistics each November for the full academic year (August to July) provides a complete account of FE participation and achievement. We therefore recommend users reference this final version of the data, when making comparisons between years.

More information on data lag is provided under ‘Timeliness and Punctuality’ within the Quality section of this methodology.

Geographical breakdowns

Local authorities

Reorganisation of local authorities means that it is not always possible to make direct comparisons for local areas between years.

Recent reorganisations include:

- On 1 April 2019 the three unitary authorities of Dorset, Bournemouth and Poole were reorganised into two new authorities: Dorset, and Bournemouth, Christchurch & Poole.

- On 1 April 2020 Wycombe, South Bucks, Chiltern and Aylesbury Vale district councils and Buckinghamshire County Council were replaced by Buckinghamshire Council unitary authority.

- On 1 April 2021 Northamptonshire was reorganised into two new unitary authorities, North Northamptonshire and West Northamptonshire.

- On 1 April 2023 Cumbria was reorganised into two new unitary authorities, Cumberland, and Westmorland and Furness.

- On 1 April 2023 Craven, Hambleton, Harrogate, Richmondshire, Ryedale, Scarborough and Selby district councils were replaced by North Yorkshire Council.

Local Enterprise Partnerships

The geographical areas covered by Local Enterprise Partnerships (LEPs) can overlap, meaning some areas fall under two LEPs. Prior to the January 2023 statistics publication, LEPs were reported based solely on the primary LEP code of the learner. Since January 2023, we have identified LEPs using both primary and secondary LEP codes. This means that learning taking place in areas covered by two overlapping LEPs is now reported against both the LEPs rather than just the LEP derived from the primary code. We have applied this new method to the historic LEP data included in this publication and updated the time series.
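The change in LEP reporting can be sketched as follows. The function name, the record layout and the LEP labels are hypothetical; the sketch only shows how counting against both codes differs from counting against the primary code alone.

```python
from collections import Counter
from typing import Iterable, Optional, Tuple


def count_by_lep(
    records: Iterable[Tuple[str, Optional[str]]], use_secondary: bool = True
) -> Counter:
    """Count learning by LEP from (primary_lep, secondary_lep) pairs.

    With use_secondary=True, learning in an overlap area is reported
    against both LEPs (the method used since January 2023).
    """
    counts: Counter = Counter()
    for primary, secondary in records:
        counts[primary] += 1
        if use_secondary and secondary and secondary != primary:
            counts[secondary] += 1
    return counts


# Placeholder LEP labels; the second record sits in an overlap area.
records = [("LEP A", None), ("LEP A", "LEP B"), ("LEP B", None)]

primary_only = count_by_lep(records, use_secondary=False)
both_codes = count_by_lep(records)
```

Under the newer method the LEP figures sum to more than the number of records, so LEP totals should not be added together to produce a national figure.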

Provider Type

In November 2025, following user feedback, we re-aligned the categories used in our provider type breakdowns.

- A new category for local authorities has been introduced.

- The “Other” category now includes HE, NHS Trusts, the armed forces, government departments and other public bodies.

A more detailed breakdown, including individual providers, can be found in our supporting files.

Footnotes

In addition to the information provided here, footnotes are provided for each data file we produce and are listed within the Apprenticeships and FE and Skills data guidance sections. The footnotes explain key features of the statistics to users and highlight any limitations and considerations regarding the data. Each chart and table featured in the publications on Explore Education Statistics is accompanied by the associated footnotes.